AI Content Detection on Adult Platforms: How the Tools Work, Where

Adult platforms are leaning harder on AI detection, but the technology remains better at filtering obvious violations than interpreting context.


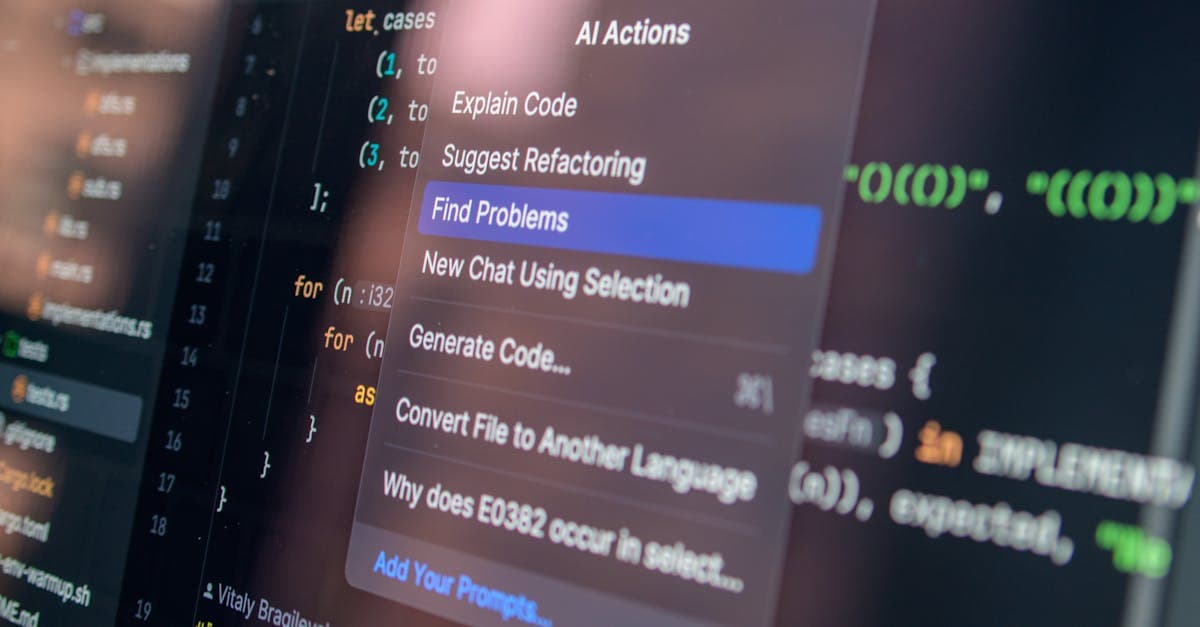

AI detection tools are becoming central to how adult platforms moderate uploads, but the phrase itself covers a wider set of technologies than most creators realize. Some systems identify nudity or explicit imagery. Others look for minors, deepfakes, copyrighted material, or suspicious patterns of reuse. A few tools try to infer whether content is synthetic, consensual, or policy-safe. None of them is fully reliable on its own.

That limitation matters because adult platforms do not just need "detection." They need defensible enforcement. A model that catches obvious violations but misses context can create false confidence. A system that over-flags legitimate content can slow uploads, freeze payouts, and frustrate creators. The hard part is not finding software that can score content. It is building a moderation pipeline that knows what to do with the score.
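To make "knowing what to do with the score" concrete, here is a minimal Python sketch of threshold-based routing. The action names and both thresholds are hypothetical, chosen only for illustration; a real platform would tune them per policy area and would likely use more than three outcomes.

```python
from enum import Enum


class Action(Enum):
    PUBLISH = "publish"                   # low risk: no human involved
    QUEUE_REVIEW = "queue_review"         # uncertain: normal human review queue
    HOLD_FOR_REVIEW = "hold_for_review"   # high risk: withheld until a human decides


# Hypothetical thresholds, not taken from any real platform.
REVIEW_THRESHOLD = 0.40
HOLD_THRESHOLD = 0.90


def route(risk_score: float) -> Action:
    """Map a risk score in [0, 1] to a moderation action."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if risk_score >= HOLD_THRESHOLD:
        return Action.HOLD_FOR_REVIEW
    if risk_score >= REVIEW_THRESHOLD:
        return Action.QUEUE_REVIEW
    return Action.PUBLISH
```

Note that even the highest band ends in human review rather than automatic removal. That is a design choice in this sketch: the score decides how urgently a person looks at the upload, not what the final decision is.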

What The Models Actually Do

Most detection systems combine several techniques. Computer vision models classify visual content frame by frame. Hashing systems match uploads against known content. Text models scan captions and metadata for policy triggers. Behavioral models flag accounts that suddenly change posting patterns, upload volume, or geographic signals. The output is usually a risk score, not a final decision.

That layered design is intentional. A single model will fail too often, but a stack of weaker systems can catch different types of risk. One tool may be good at identifying explicit imagery. Another may be better at spotting reuse. Another may detect anomalies in account behavior. When combined, they produce a useful moderation signal even if none of them is perfect in isolation.
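The layered design can be sketched in a few lines of Python. The signal names mirror the four techniques described above, but the weights and the hash-match override are assumptions made for illustration, not values any platform is known to use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signals:
    vision: float      # explicit-imagery classifier score, 0..1
    hash_match: float  # similarity to known content, 0..1
    text: float        # caption and metadata policy score, 0..1
    behavior: float    # account-anomaly score, 0..1


# Hypothetical weights that sum to 1.0.
WEIGHTS = {"vision": 0.40, "hash_match": 0.30, "text": 0.15, "behavior": 0.15}

# Hypothetical cutoff above which a hash match is treated as decisive.
HASH_OVERRIDE = 0.98


def combined_risk(s: Signals) -> float:
    """Blend the individual scores into one risk score in [0, 1]."""
    weighted = sum(getattr(s, name) * w for name, w in WEIGHTS.items())
    # A near-certain match against known content should not be diluted
    # by clean scores from the other detectors.
    override = s.hash_match if s.hash_match >= HASH_OVERRIDE else 0.0
    return max(weighted, override)
```

The override illustrates why a stack beats a single model: each detector can dominate in the case it is good at, while the weighted blend handles uploads where no single signal is conclusive.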

The catch is that these tools usually work best on standard cases. If the content is strange, stylized, cropped, filtered, or edited, performance drops. That is why detection systems look stronger in policy decks than they do in real creator workflows.

Where They Fail

False positives are the biggest operational problem. A detector may flag lingerie, bodybuilding, makeup tutorials, or art photography because the visual patterns overlap with explicit content. For a creator, that can mean review delays or content removal for something that was clearly within policy. For the platform, it creates support load and trust erosion.

False negatives are the opposite problem. Bad actors can sometimes evade detection by altering frames, applying color filters, compositing, or adding synthetic overlays. The more adversarial the environment, the more the model ends up chasing a moving target. Adult platforms are especially exposed because the incentive to evade moderation is strong and the content economy rewards speed.
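A toy Python example shows why simple edits matter for hash matching. It compares an exact cryptographic hash with a basic "average hash" on a tiny grayscale grid. The 4x4 grid stands in for an already downscaled image, and the brightness shift is a stand-in for a mild filter; both are illustrative assumptions, and production systems use considerably more robust perceptual hashes.

```python
import hashlib


def exact_hash(pixels: list[list[int]]) -> str:
    """SHA-256 of the raw pixel bytes: changes completely on any edit."""
    raw = bytes(v for row in pixels for v in row)
    return hashlib.sha256(raw).hexdigest()


def average_hash(pixels: list[list[int]]) -> int:
    """One bit per pixel: 1 if the pixel is brighter than the grid's mean."""
    flat = [v for row in pixels for v in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for v in flat:
        bits = (bits << 1) | (1 if v > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


original = [
    [10, 200, 30, 220],
    [15, 210, 25, 230],
    [240, 20, 250, 35],
    [245, 5, 235, 40],
]
# Simulate a mild brightness filter: add 5 to every pixel, capped at 255.
filtered = [[min(255, v + 5) for v in row] for row in original]

exact_changed = exact_hash(original) != exact_hash(filtered)
perceptual_distance = hamming(average_hash(original), average_hash(filtered))
```

Here the exact hash no longer matches after the filter, while the average hash is unchanged because every pixel stays on the same side of the mean. Heavier edits such as cropping or compositing can still push a perceptual hash past whatever distance cutoff a system uses, which is the evasion problem described above.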

Context is the hardest variable of all. A face may resemble a known person without being that person. A clip may be synthetic without being unlawful. A body may appear underage while the person is verified as an adult. AI systems are still poor at resolving those cases without additional review. That is why human escalation remains necessary.

The Economics Of Detection

AI detection is not just a safety tool. It is a cost tool. Every hour a human moderator does not spend on obvious violations saves money. Every automated filter that reduces spam and repeat offenders lowers the burden on support teams. If a platform handles millions of uploads, the savings can be material even if the model is imperfect.

The trade-off is that better detection usually means higher infrastructure cost. Running classifiers at scale, storing risk scores, and routing escalations all require compute and engineering. Platforms have to decide where the marginal dollar is best spent: on better models, faster human review, or more explicit policy boundaries. In most cases, the answer is a mix of all three.
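The trade-off can be put into rough arithmetic. Every number in the Python sketch below is a made-up placeholder (upload volume, review time, hourly rate, share of uploads escalated, and inference cost per upload), used only to show the shape of the calculation.

```python
def review_cost(uploads: int, share_reviewed: float,
                seconds_per_review: float, hourly_rate: float) -> float:
    """Monthly cost of human review for the share of uploads that reach a person."""
    hours = uploads * share_reviewed * seconds_per_review / 3600
    return hours * hourly_rate


# All figures are hypothetical placeholders, in arbitrary currency units.
UPLOADS_PER_MONTH = 2_000_000
SECONDS_PER_REVIEW = 30
HOURLY_RATE = 20.0
SHARE_ESCALATED = 0.15           # share of uploads still sent to humans
INFERENCE_COST_PER_UPLOAD = 0.002

all_human = review_cost(UPLOADS_PER_MONTH, 1.0, SECONDS_PER_REVIEW, HOURLY_RATE)
with_filter = review_cost(UPLOADS_PER_MONTH, SHARE_ESCALATED,
                          SECONDS_PER_REVIEW, HOURLY_RATE)
inference = UPLOADS_PER_MONTH * INFERENCE_COST_PER_UPLOAD

net_saving = all_human - (with_filter + inference)
```

With these placeholder inputs the net saving comes to roughly 279,000 per month. The point is not the figure but the structure: the saving shrinks as the escalated share or the per-upload compute cost rises, which is why platforms weigh model quality against reviewer capacity.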

Creators rarely see that cost structure directly, but they feel its consequences through delays and enforcement changes. When a platform tightens AI detection, account processing can slow down. When it loosens detection too much, illegal or infringing content leaks through and triggers a stricter response later. Either way, moderation tech has a real financial footprint.

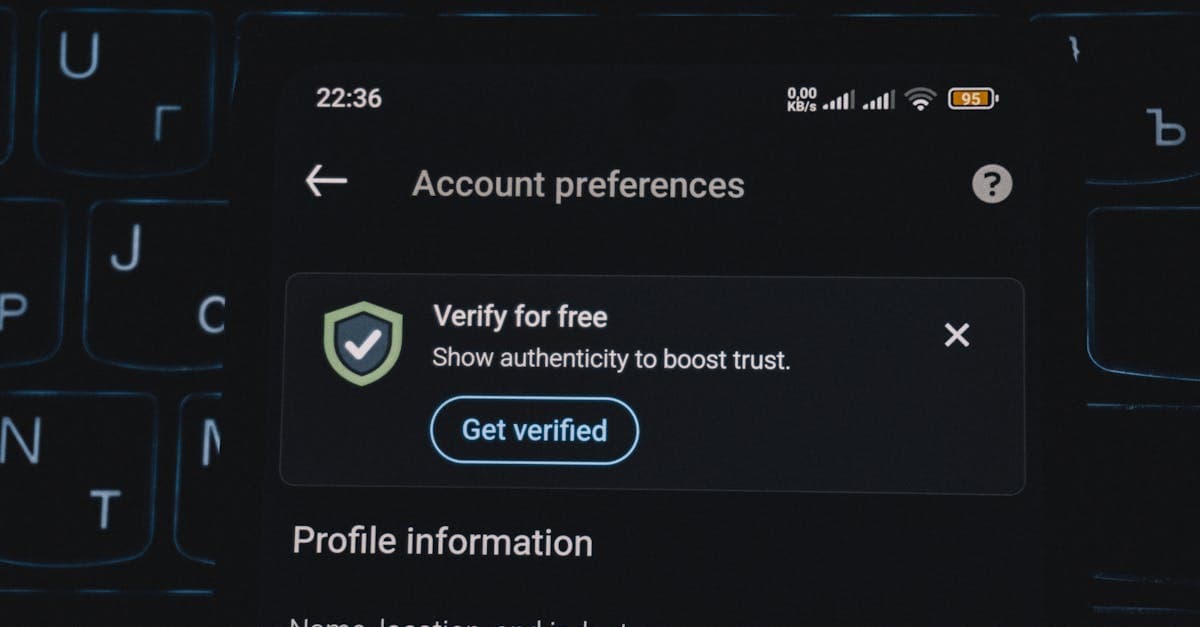

The Next Layer Is Provenance

The next generation of detection is likely to focus less on "what does this look like" and more on "where did this come from." Provenance signals, content signatures, creator verification, and source metadata will matter more as synthetic media gets harder to spot by eye. Platforms need to know whether a file is original, reused, licensed, or generated.
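One way to picture a provenance signal is a signed record that ties a file's hash to a creator and a declared source. The Python sketch below is a simplified assumption: the record fields, the `sign_record` and `verify_record` helpers, and the shared secret key are all hypothetical, and real provenance schemes generally rely on public-key signatures rather than a single shared secret.

```python
import hashlib
import hmac
import json

# Hypothetical demo key; a real system would not hard-code a secret.
SECRET_KEY = b"demo-key-not-for-production"


def sign_record(creator_id: str, content: bytes, source: str) -> dict:
    """Create a provenance record binding content to a creator and source."""
    record = {
        "creator_id": creator_id,
        "sha256": hashlib.sha256(content).hexdigest(),
        "source": source,  # for example "original", "licensed", or "generated"
    }
    payload = json.dumps(record, sort_keys=True).encode()
    record["signature"] = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    return record


def verify_record(record: dict, content: bytes) -> bool:
    """Check that the record is untampered and matches the given file bytes."""
    claimed = {k: v for k, v in record.items() if k != "signature"}
    payload = json.dumps(claimed, sort_keys=True).encode()
    expected = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, record.get("signature", ""))
    content_ok = claimed.get("sha256") == hashlib.sha256(content).hexdigest()
    return signature_ok and content_ok
```

A record like this answers "where did this come from" only as far as the original declaration was honest. It can show that a file has not changed since it was registered and who registered it, but not that the declared source was true in the first place.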

That shift is particularly important for adult platforms because consent and identity are core trust issues. A system that can verify origins and route edge cases for review is more valuable than one that merely says "this looks explicit." The future of moderation is less about banning obvious content and more about validating legitimacy at scale.

There is also a strategic angle. Platforms that build credible provenance systems may be better positioned with payment partners, regulators, and advertisers than platforms that rely on blunt filters alone. Detection will still matter, but provenance may become the stronger trust signal.

The unresolved question is accountability. If an AI tool flags content incorrectly, the creator needs a human explanation, a correction path, and confidence that the same mistake will not repeat indefinitely. Detection systems will become more common, but their legitimacy will depend on appeal quality. A platform that automates enforcement without improving review transparency simply moves the bottleneck from moderators to models.

That is why appeal logs, model updates, and human override rates will become important trust signals for creators. How well platforms close that transparency gap will shape adoption.
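As a rough illustration of the human override rate mentioned above, the Python sketch below computes, per model version, the share of appealed decisions that reviewers overturned. The log format, field names, and sample entries are hypothetical.

```python
from collections import Counter


def override_rates(appeals: list[dict]) -> dict[str, float]:
    """Share of appealed decisions overturned by humans, per model version."""
    totals: Counter = Counter()
    overturned: Counter = Counter()
    for appeal in appeals:
        version = appeal["model_version"]
        totals[version] += 1
        if appeal["outcome"] == "overturned":
            overturned[version] += 1
    return {version: overturned[version] / totals[version] for version in totals}


# Hypothetical appeal log entries, invented for this example.
sample_appeals = [
    {"model_version": "v1", "outcome": "overturned"},
    {"model_version": "v1", "outcome": "upheld"},
    {"model_version": "v1", "outcome": "overturned"},
    {"model_version": "v1", "outcome": "upheld"},
    {"model_version": "v2", "outcome": "upheld"},
    {"model_version": "v2", "outcome": "overturned"},
    {"model_version": "v2", "outcome": "upheld"},
    {"model_version": "v2", "outcome": "upheld"},
]

rates = override_rates(sample_appeals)
```

In this invented sample, half of the appealed `v1` decisions were overturned against a quarter for `v2`. Published over time, a figure like that would let creators see whether a model update actually reduced wrongful flags.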

What This Means

AI detection is improving, but it is not close to solving adult moderation on its own. The tools are best understood as assistive filters that reduce obvious risk and make human review more efficient. They are not judges, and they are not substitutes for policy.

What to watch next is the move from content classification to provenance and consent verification. The platforms that can show they know what content is, where it came from, and who authorized it will have the strongest compliance story. That is where the real competition is heading.

The hardest part of that shift is trust. A platform can say it has better detection, but creators will only believe it if false positives fall and decisions become easier to understand. In other words, the model does not just have to be smarter. It has to be more legible.

That is why the most useful AI systems in this space will probably be the ones that assist humans rather than replace them. They can pre-score uploads, cluster suspicious accounts, and route the riskiest cases to review. But they still need human judgment to handle consent, identity, and context.

The business case is solid because every minute saved in moderation lowers operating cost. The creator case is also solid because faster decisions reduce payout friction. When both sides benefit, adoption becomes much easier to defend.

The next generation of tools may also be expected to produce audit trails. If a platform can show why a file was flagged, how the decision was made, and what evidence supported it, the moderation story becomes more defensible to both creators and regulators.
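An audit trail of that kind could be as simple as one structured record per decision. The Python sketch below is an assumption about what such a record might hold; the `AuditRecord` fields and the `to_log_line` helper are hypothetical names, not an existing standard or schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    upload_id: str
    model_version: str
    risk_score: float
    triggered_signals: tuple[str, ...]  # which detectors contributed
    decision: str                       # for example "queue_review"
    decided_by: str                     # "model" or a reviewer identifier
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record: AuditRecord) -> str:
    """Serialize a record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


example = AuditRecord(
    upload_id="upload-001",
    model_version="v2",
    risk_score=0.62,
    triggered_signals=("vision", "text"),
    decision="queue_review",
    decided_by="model",
)
log_line = to_log_line(example)
```

Recording the model version and the contributing signals alongside the decision is what lets someone outside the moderation team reconstruct why a file was flagged and whether a later model update would have treated it differently.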

That is where AI moderation is headed: less like a magic filter, more like a structured evidence system.

That kind of evidence system matters because it can be audited by people outside the moderation team. Payment partners want proof that the platform is serious. Regulators want evidence that the rules are enforceable. Creators want a path to understand why something was flagged. Better records help all three.

The most realistic future is still human-led, but with far better pre-screening and much clearer records. That should reduce noise, improve turnaround times, and make the entire moderation process easier to defend when things go wrong.

That shift gives platforms a better operational story and creators a better experience. It does not eliminate moderation disputes, but it makes them easier to explain and easier to resolve.

That is the direction the market is already taking: more evidence, more traceability, and fewer decisions that feel like black-box punishment. It fits every stakeholder a little better. Creators get faster answers, platforms get better control, and reviewers get clearer signals. In a category built on risk and fragile trust, that shift matters as much as raw detection accuracy.

The reason this matters is simple: every extra layer of explainability lowers the chance that creators treat moderation as arbitrary. A platform that can show its work is much easier to trust than one that simply disappears content and leaves people guessing.

That kind of trust has commercial value. It reduces escalations, limits payout friction, and makes the platform easier to support with stronger payment and compliance relationships.

In that sense, AI moderation is becoming a governance tool as much as a filtering tool.

Taken together, the business case and the governance case point the same way: fewer false alarms, lower review cost, more predictable enforcement, and decisions a platform can actually explain.
